You are currently browsing jeff’s articles.

There was all this discussion about Steven Landsburg’s taxation example.

Nothing makes my job easier than a journalist who writes about something interesting and gets it 100% wrong.

Thanks, then, to Elizabeth Lesly Stevens for her column in yesterday’s Bay Citizen. Stevens wants to tax the “idle rich”, her Exhibit A being Robert Kendrick, heir to the $84 million Schlage Lock Company fortune. According to Ms. Stevens, Mr. Kendrick appears to do pretty much nothing but park and re-park his four cars all day long. Taxing people like Mr. Kendrick, she says, has to be part of any solution to America’s fiscal crisis.

Here’s what Ms. Stevens misses: Assuming the facts are as she states them, it is quite literally impossible to raise revenue by taxing the likes of Mr. Kendrick. We could argue about whether it’s desirable, but because it’s impossible, the discussion is moot.

The point is that once we look at the real economy, i.e. the allocation of goods and services and how taxing Mr. Kendrick would alter it, we see that since he is consuming nothing, any increase in consumption by the government must take resources away from somebody else.

If that doesn’t persuade you then consider this. Suppose Kendrick puts all of his assets into a pile of cash and burns it. There is no effect on anybody’s consumption. (Assume he gets no consumption value from the bonfire.) If at the same time the government prints an equal number of dollars and spends them, consumption allocations have been altered but not Mr. Kendrick’s. Whatever goods and services the government consumes must come from somebody other than him. Now observe that there is no difference at all between the scenario in which the money is burned by one party and printed by another and the scenario in which it is handed over directly through a tax.

Professor Landsburg makes a contribution by presenting these examples which force us to think carefully about concepts we normally take for granted. Indeed he is even willing to adopt the persona of a smug provocateur to get his point across, and we owe him our thanks for that sacrifice in service of the greater good.

On the other hand we should recognize that this exercise is really beside the point. The government certainly can raise revenue by taking Mr. Kendrick’s assets. The fact is that the dollar value of his assets is a claim on goods and services that will eventually be exercised by whoever inherits the assets. Taxing his assets today means taking those claims away from those heirs. Moreover, the real allocation of resources will be altered in a way that is right in line with the spirit of the original columnist’s motivation. The government will consume more today, others will save more today. Those savers will consume more in the future and Mr. Kendrick’s windfall heirs will consume less.

A series of photos he took in the summer of 1949 as a journalist. Many of them are downright Kubrickian. He has a way with furniture.

That’s the famed Pump Room

Tyler raises the cash-grants versus Medicare question:

Nonetheless I propose a more modest version of the idea. When people turn a certain age, allow them to trade in the current benefits package for a minimalistic package (set broken limbs and offer lots of potent painkillers), plus some of the rest in cash, doled out over the years if need be. For some people, medical tourism will fill the gap.

Even if you believe that cash grants are a more efficient way to achieve whatever end Medicare achieves, you should still be opposed to this idea, because Medicare will never go away for good. You can “replace” it with cash grants but eventually people will notice again that old people want health care subsidies and then you will have both cash grants and Medicare.

Indeed, we already had cash grants in the form of Social Security when Medicare was introduced as a supplement to Social Security in 1965.

Your home is underwater, but you can’t use that to keep your lawn green, and the homeowner’s association is threatening to sue. What do you do? Paint it.

The grass spraying business took off here as the housing crisis escalated and real estate brokers were looking to quickly increase the curb appeal of abandoned properties on the cheap. A lawn painting, using a vegetable-based dye, can cost about $200. Vigorous homeowners’ associations, which can fine owners thousands of dollars if a dispute drags on, have also been good for business, said Klaus Lehmann of Turf-Painters Enterprise.

How could it be that millions of users sign on to a service like Twitter and voluntarily impose upon themselves a constraint to talk in no more than 140 character dollops at a time? Of course the answer is that they want access to the network, Twitter owns it and Twitter sets the rules. But then the question is why a restriction like that is the blueprint for a successful network.

Here’s an analogy. Imagine that you own a vacant lot where every weekend people meet to buy and sell stuff. You don’t charge any entry fees and you don’t take a cut from any transaction, you simply want to be the most popular vacant lot in town.

Every seller who is there selling stuff contributes value to the market as a whole and you internalize that overall value. But you and the sellers have a basic conflict of interest because they are maximizing their own profits and not the overall value of being in the market. When they raise prices they extract surplus from the people willing to pay high prices and in the process reduce the surplus of people who don’t.

From your point of view the extracted surplus is just a transfer of value from one member of your club to another, and what you really care about is the lost value from the excluded sales. So you will generally want lower prices than the sellers would set on their own.

Now think of each message in a social network as having two components: information and self-promotion. People follow you if you provide them with useful information. And if your information is useful some would even be willing to wade through some self-promotion to get to your useful information. But not all. The self-promotion is the price of the information and it’s a transfer of value because it costs the follower his attention and enhances the followee’s reputation.

From Twitter’s point of view the users are a bunch of tiny monopolists willing to exchange a little bit of overall surplus for a bit more of their own. The 140-character limit is like a price cap imposed by the owner of the vacant lot which boosts the information/self-promotion ratio of tweets.

The surprising thing is that some users aren’t just outright banned.

It’s almost as though the implosion was manufactured for the sole purpose of creating fodder for commentary.

Most of the inaccurate information about me on the Internet is harmless. And negative opinions about the quality of my work are always legitimate. The trouble starts when advocates for one cause or another use me as a whipping boy to promote their agendas. As I mentioned, the way that works is that they take out of context something I’ve written, paraphrase it incorrectly, and market me as a perfect example of the thought-criminal that they’ve been warning everyone about. I don’t think any of this is an organized conspiracy. I think it’s a combination of zealotry, bad reading comprehension, opportunism, and some herd behavior.

[If you’re new to this, the paragraph above is the part that will be taken out of context and paraphrased to show that I’m paranoid and delusional, claiming that organized groups are out to get me.]

The full article is likely to be your best read of the week.

From Doktor Frank, quoting Hemingway, or Roald Dahl, or somebody.

The best way is always to stop when you are going good and when you know what will happen next. If you do that every day you will never be stuck. Always stop while you are going good and don’t think about it or worry about it until you start to write the next day. That way your subconscious will work on it all the time. But if you think about it consciously or worry about it you will kill it and your brain will be tired before you start.

Outstanding advice, but there is a tradeoff. Being on a roll means being connected to what you are writing right now. And that connection can lapse after time passes, even just overnight. Material which was gold last night can turn into lead the next morning. A better bridge is to conclude the session with rapid, sketchy, outliny writing and start the next day by fleshing out the overhang to re-establish the flow.

This is the third and final post on ticket pricing motivated by the new restaurant Next in Chicago and proprietors Grant Achatz and Nick Kokonas’s new ticket policy. In the previous two installments I tried to use standard mechanism design theory to see what comes out when you feed in some non-standard pricing motives having to do with enhancing “consumer value.” The two attempts that most naturally come to mind yielded insights but not a useful pricing system. Today the third time is the charm.

Things start to fall into place when we pay close attention to this part of Nick’s comment to us:

we never want to invert the value proposition so that customers are paying a premium that is disproportionate to the amount of food / quality of service they receive.

I propose to formalize this as follows. From the restaurant’s point of view, consumer surplus is valuable but some consumers are prepared to bid even more than the true value of the service they will get. The restaurant doesn’t count these skyscraping bids as actually reflecting consumer surplus and they don’t want to tailor their mechanism to cater to them. In particular, the restaurant distinguishes willingness to pay from “value.”

I can think of a number of sensible reasons they would take this view. They might know that many patrons overestimate the value of a seating at Next. Indeed the restaurant might worry that high prices by themselves artificially inflate willingness to pay. They don’t want a bubble. And they worry about their reputation if someone pays $1700 for a ticket, gets only $1000 worth of value and publicly gripes. Finally they might just honestly believe that willingness to pay is a poor measure of welfare especially when comparing high versus low.

Whatever the reason, let’s run with it. Let’s define $u(v)$ to be the value, as the restaurant perceives it, that would be realized by service to a patron whose willingness to pay is $v$. One natural example would be

$$u(v) = \min\{v, \bar{v}\}$$

where $\bar{v}$ is some prespecified “cap.” It would be like saying that nobody, no matter how much they say they are willing to pay, really gets a value larger than $\bar{v}$ from eating at Next.

Now let’s consider the optimal pricing mechanism for a restaurant that maximizes a weighted sum of profit and consumer’s surplus, where now consumer’s surplus is measured as the difference between $u(v)$ and whatever price is paid. The weight on profit is $\alpha$ and the weight on consumer surplus is $1-\alpha$. After you integrate by parts you now get the following formula for virtual surplus.

$$(1-\alpha)\,u(v) + (2\alpha-1)\left[v - \frac{1-F(v)}{f(v)}\right]$$

(Here $F$ is the distribution of willingness to pay and $f$ is its density.)

And now we have something! Because if $\alpha$ is between $0$ and $1/2$ then the first term is increasing in $v$ (up to the cap $\bar{v}$) and the second term is decreasing. For $\alpha$ close enough to $1/2$, the overall virtual surplus is going to be first increasing and then decreasing. And that means that the optimal mechanism is something new. When bids are in the low to moderate range, you use an auction to decide who gets served. But above some level, high bidders don’t get any further advantage and they are all lumped together.

The optimal mechanism is a hybrid between an auction and a lottery. It has no reserve price (over and above the cost of service) so there are never empty seats. It earns profits but eschews exorbitant prices.
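To see the shape that drives this result, here is a small Python sketch. It assumes the virtual surplus takes the form $(1-\alpha)\,u(v) + (2\alpha-1)\,[v - (1-F(v))/f(v)]$ with $u(v) = \min(v, \text{cap})$, where $\alpha$ is the weight on profit. The uniform distribution on [0, 1], the weight of 2/5 and the cap of 3/5 are illustrative choices, not values from the post, and the function names are made up for the example.

```python
from fractions import Fraction

ALPHA = Fraction(2, 5)   # hypothetical weight on profit, between 1/3 and 1/2
CAP = Fraction(3, 5)     # hypothetical cap on the value the restaurant recognizes


def perceived_value(v, cap=CAP):
    """Value as the restaurant perceives it: willingness to pay, truncated at the cap."""
    return min(v, cap)


def virtual_surplus(v, alpha=ALPHA, cap=CAP):
    """Virtual surplus when willingness to pay is uniform on [0, 1] (F(v) = v, f(v) = 1)."""
    inverse_hazard = 1 - v  # (1 - F(v)) / f(v) for the uniform distribution
    return (1 - alpha) * perceived_value(v, cap) + (2 * alpha - 1) * (v - inverse_hazard)


# Evaluate on a grid of willingness-to-pay levels 0, 0.1, ..., 1.
grid = [Fraction(k, 10) for k in range(11)]
values = [virtual_surplus(v) for v in grid]
peak = grid[values.index(max(values))]

for v, s in zip(grid, values):
    print(f"v = {float(v):.1f}  virtual surplus = {float(s):.3f}")
print("virtual surplus peaks at v =", peak)
```

Under these assumptions the virtual surplus rises up to the cap and falls after it. Bids on the rising portion are ranked as in an auction, while the falling portion is what leads the mechanism to lump high bidders together. Locating exactly where the pooling starts in the optimal mechanism requires the standard ironing step, which this sketch does not attempt.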

It has clear advantages over a fixed price. A fixed price is a blunt instrument that has to serve two conflicting purposes. It has to be high enough to earn sufficient revenue on dates when demand is high enough to support it, but it can’t be so high that it leads to empty seats on dates when demand is lower. An auction with rationing at the top is flexible enough to deal with both tasks independently. When demand is high the fixed price (and rationing) is in effect. When demand is low the auction takes care of adjusting the price downward to keep the restaurant full. The revenue-enhancing effect of low prices is an under-appreciated benefit of an auction. Finally, it’s an efficient allocation system for the middle range of prices so scalping motivations are reduced compared to a fixed price.

Incentives for scalping are not eliminated altogether because of the rationing at the top. This can be dealt with by controlling the resale market. Indeed here is one clear message that comes out of all of this. Whatever motivation the restaurant has for rationing sales, it is never optimal to allow unfettered resale of tickets. That only undermines what you were trying to achieve. Now Grant Achatz and Nick Kokonas understand that but they are forced to condone the Craigslist market because by law non-refundable tickets must be freely transferable.

But the cure is worse than the disease. In fact refundable tickets are your friend. The reason someone wants to return their ticket for a refund is that their willingness to pay has dropped below the price. But there is somebody else with a willingness to pay that is above the price. We know this for sure because tickets are being rationed at that price. Granting the refund allows the restaurant to immediately re-sell it to the next guy waiting in line. Indeed, a hosted resale market would enable the restaurant to ensure that such transactions take place instantaneously through an automated system according to the same terms under which tickets were originally sold.

Someone ought to try this.

A meditation on tipping in Australia versus the United States.

And Manhattan is really cool these days. Especially with the Aussie kicking seven kinds of Chinese tripe out of the greenback. But that rest room, it was a marvel. If it was a person I’d say it’d been scrubbed until its bellybutton shined. The mountain of crisp, white, freshly laundered hand towels never got any smaller despite the constant stream of punters using and discarding them. The wash basin, gleaming and shining, fairly groaned under the weight of the vast selection of cleansing gels, moisturizers, and other masculine hygiene products with which I must profess myself completely unfamiliar. Not one stray, errant drop marked the floor of this restroom. Nary a single pubic hair had escaped to run wild on the immaculate tiling. And it was all thanks to the dude from Senegal who was doing it for minimum wage and tips.

It seems that the toilets are not so clean in Oz. And tipping, evidently an American import, hasn’t exactly captured the imagination down under. The comments following the article are especially entertaining.

Restaurants, touring musicians, and sports franchises are not out to gouge every last penny out of their patrons. They want patrons to enjoy their craft but also to come away feeling like they didn’t pay an arm and a leg. Yesterday I tried to formalize this motivation as maximizing consumer surplus but that didn’t give a useful answer. Maximizing consumer surplus means either complete rationing (and zero profit) or going all the way to an auction (a more general argument for why appears below.) So today I will try something different.

Presumably the restaurant cares about profits too. So it makes sense to study the mechanism that maximizes a weighted sum of profits and consumer’s surplus. We can do that. Standard optimal mechanism design proceeds by a sequence of mathematical tricks to derive a measure of a consumer’s value called virtual surplus. Virtual surplus allows you to treat any selling mechanism you can imagine as if it worked like this:

- Consumers submit “bids”

- Based on the bids received the seller computes the virtual surplus of each consumer.

- The consumer with the highest virtual surplus is served.

If you write down the optimal mechanism design problem where the seller puts weight $\alpha$ on profits and weight $1-\alpha$ on consumer surplus, and you do all the integration by parts, you get this formula for virtual surplus.

$$\alpha v + (1-2\alpha)\,\frac{1-F(v)}{f(v)}$$

where $v$ is the consumer’s willingness to pay, $F(v)$ is the proportion of consumers with willingness to pay less than $v$ and $f(v)$ is the corresponding probability density function. That last ratio is called the (inverse) hazard rate.
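For readers who want to see where such a formula comes from, here is a sketch of the integration by parts under the assumptions used in these posts: the cost of service is netted out to zero, and a consumer with zero willingness to pay gets zero utility (no payments to non-buyers). The symbols $q(v)$, $t(v)$ and $U(v)$ are notation introduced here for illustration: the probability that a consumer with willingness to pay $v$ is served, his expected payment, and his expected utility. As in the text, $\alpha$ is the weight on profit, $F$ is the distribution of willingness to pay on $[0, \bar{v}]$, and $f$ is its density.

```latex
\begin{align*}
U(v) &= v\,q(v) - t(v) = \int_0^v q(s)\,ds
  && \text{(incentive compatibility, with } U(0)=0\text{)} \\
\text{consumer surplus} &= \int U(v)\,f(v)\,dv
  = \int q(v)\,\bigl(1-F(v)\bigr)\,dv
  && \text{(integration by parts)} \\
\text{profit} &= \int t(v)\,f(v)\,dv
  = \int \Bigl[\,v - \tfrac{1-F(v)}{f(v)}\Bigr]\,q(v)\,f(v)\,dv \\
\alpha\cdot\text{profit} + (1-\alpha)\cdot\text{consumer surplus}
  &= \int \Bigl[\,\alpha v + (1-2\alpha)\,\tfrac{1-F(v)}{f(v)}\Bigr]\,q(v)\,f(v)\,dv
\end{align*}
```

The bracketed term in the last line is the virtual surplus. The objective is an average of virtual surplus weighted by the probability of being served, which is why the seller wants to serve whoever has the highest virtual surplus, subject to the incentive constraint that $q$ be nondecreasing.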

As usual, just staring down this formula tells you just about everything you want to know about how to design the pricing system. One very important thing to know is what to do when virtual surplus is a decreasing function of the consumer’s willingness to pay $v$. If we have a decreasing virtual surplus then we learn that it’s at least as important to serve the low valuation buyers as those with high valuations (see point 3 above.)

But here’s a key observation: it’s impossible to sell to low valuation buyers and not also to high valuation buyers because whatever price the former will agree to pay the latter will pay too. So a decreasing virtual surplus means that you do the next best thing: you treat high and low value types the same. This is how rationing becomes part of an optimal mechanism.

For example, suppose the weight on profit is equal to $0$. That brings us back to yesterday’s problem of just maximizing consumer surplus. And our formula now tells us why complete rationing is optimal because it tells us that virtual surplus is just equal to the (inverse) hazard rate which is typically monotonically decreasing. Intuitively here’s what the virtual surplus is telling us when we are trying to maximize consumer surplus. If we are faced with two bidders and one has a higher valuation than the other, then to try to discriminate would require that we set a price in between the two. That’s too costly for us because it would cut into the consumer surplus of the eventual winner.

So that’s how we get the answer I discussed yesterday. Before going on I would like to elaborate on yesterday’s post based on correspondence I had with a few commenters, especially David Miller and Kane Sweeney. Their comments highlight two assumptions that are used to get the rationing conclusion: monotone hazard rate, and no payments to non-buyers. It gets a little more technical than usual so I am going to put it here in an addendum to yesterday (scroll down for the addendum.)

Now back to the general case we are looking at today, we can consider other values of $\alpha$, the weight on profit. An important benchmark case is $\alpha = 1/2$, when virtual surplus reduces to just $v/2$, now monotonically increasing. That says that a seller who puts equal weight on profits and consumer surplus will always allocate to the highest bidder because his virtual surplus is higher. An auction does the job, in fact a second price auction is optimal. The seller is implementing the efficient outcome.

More interesting is when $\alpha$ is between $0$ and $1/2$. In general then the shape of the virtual surplus will depend on the distribution $F$, but the general tendency will be toward either complete rationing or an efficient auction. To illustrate, suppose that willingness to pay is distributed uniformly from $0$ to $1$. Then virtual surplus reduces to

$$\alpha v + (1-2\alpha)(1-v) = (3\alpha-1)\,v + (1-2\alpha)$$

which is either decreasing over the whole range of $v$ (when $\alpha < 1/3$), implying complete rationing or increasing over the whole range (when $\alpha > 1/3$), prescribing an auction.

Finally when $\alpha > 1/2$ virtual surplus is the difference between an increasing function and a decreasing function and so it is increasing over the whole range and this means that an auction is optimal (now typically with a reserve price above cost so that in return for higher profits the restaurant lives with empty tables and inefficiency. This is not something any restaurant would choose if it can at all avoid it.)
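The uniform illustration can be checked with a few lines of Python. This is a minimal sketch that assumes the virtual surplus has the form $\alpha v + (1-2\alpha)(1-F(v))/f(v)$, where $\alpha$ is the weight on profit; the function names are made up for the example.

```python
from fractions import Fraction


def virtual_surplus_uniform(v, alpha):
    """Virtual surplus when willingness to pay is uniform on [0, 1]."""
    inverse_hazard = 1 - v  # (1 - F(v)) / f(v) with F(v) = v and f(v) = 1
    return alpha * v + (1 - 2 * alpha) * inverse_hazard


def prescribed_mechanism(alpha):
    """Read the mechanism off the slope of virtual surplus, which is linear in v here."""
    slope = virtual_surplus_uniform(Fraction(1), alpha) - virtual_surplus_uniform(Fraction(0), alpha)
    if slope > 0:
        return "auction"
    if slope < 0:
        return "complete rationing"
    return "indifferent"


for alpha in [Fraction(0), Fraction(1, 4), Fraction(1, 3), Fraction(2, 5), Fraction(1, 2), Fraction(3, 4)]:
    print(f"weight on profit {alpha}: {prescribed_mechanism(alpha)}")
```

Because the slope works out to $3\alpha - 1$, the switch from rationing to an auction happens at a weight of exactly one third in this uniform case.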

What do we conclude from this? Maximizing a weighted sum of consumer surplus and profit again yields one of two possible mechanisms: complete rationing or an auction. Neither of these mechanisms seems to fit what Nick Kokonas was looking for in his comment to us and so we have to go back to the drawing board again.

Tomorrow I will take a closer look and extract a more refined version of Nick’s objective that will in fact produce a new kind of mechanism that may just fit the bill.

Addendum: Check out these related papers by Bulow and Klemperer (dcd: glen weyl) and by Daniele Condorelli.

Complaining about TSA screening is considered by the TSA to be cause for additional scrutiny.

Agent Jose Melendez-Perez told the 9/11 commission that Mohammed al-Qahtani “became visibly upset” and arrogantly pointed his finger in the agent’s face when asked why he did not have an airline ticket for a return flight.

But some experts say terrorists are much more likely to avoid confrontations with authorities, saying an al Qaeda training manual instructs members to blend in.

“I think the idea that they would try to draw attention to themselves by being arrogant at airport security, it fails the common sense test,” said CNN National Security Analyst Peter Bergen. “And it also fails what we know about their behaviors in the past.”

Last week, in response to our proposal for how to run a ticket market, Nick Kokonas of Next Restaurant wrote something interesting.

Simply, we never want to invert the value proposition so that customers are paying a premium that is disproportionate to the amount of food / quality of service they receive. Right now we have it as a great bargain for those who can buy tickets. Ideally, we keep it a great value and stay full.

Economists are not used to that kind of thinking and certainly not accustomed to putting such objectives into our models, but we should. Many sellers share Nick’s view and the economist’s job is to show the best way to achieve a principal’s objective, whatever it may be. We certainly have the tools to do it.

Here’s an interesting observation to start with. Suppose that we interpret Nick as wanting to maximize consumer surplus. What pricing mechanism does that? A fixed price has the advantage of giving high consumer surplus when willingness to pay is high. The key disadvantage is rationing: a fixed price has no way of ensuring that the guy with a high value and therefore high consumer surplus gets served ahead of a guy with a low value.

By contrast an auction always serves the guy with the highest value and that translates to higher consumer surplus at any given price. But the competition of an auction will lead to higher prices. So which effect dominates?

Here’s a little example. Suppose you have two bidders and each has a willingness to pay that is distributed according to the uniform distribution on the interval $[0,1]$. Let’s net out the cost of service and hence take that to be zero.

If you use a rationing system, each bidder has a 50-50 chance of winning and paying nothing (i.e. paying the cost of service.) So a bidder whose value for service is $v$ will have expected consumer surplus equal to $v/2$.

If instead you use an auction, what happens? First, the highest bidder will win so that a bidder with value $v$ wins with probability $v$. (That’s just the probability that his opponent had a lower value.) For bidders with high values that is going to be higher than the 50-50 probability from the rationing system. That’s the benefit of an auction.

However he is going to have to pay for it and his expected payment when he wins is $v/2$. (The simplest way to see this is to consider a second-price auction where he pays his opponent’s bid. His opponent has a dominant strategy to bid his value, and with the uniform distribution that value will be $v/2$ on average conditional on being below $v$.) So his consumer surplus is only $v \cdot (v - v/2) = v^2/2$ because when he wins his surplus is his value $v$ minus his expected payment $v/2$, and he wins with probability $v$.

So in this example we see that, from the point of view of consumer surplus, the benefits of the efficiency of an auction are more than offset by the cost of higher prices. But this is just one example and an auction is just one of many ways we could think of improving upon rationing.
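The comparison in this example can be written out as a short Python sketch. It follows the reasoning above for two bidders with values uniform on [0, 1] and zero cost of service: a lottery gives a bidder with value v a surplus of v/2, while a second-price auction gives him v times (v minus v/2). The function names are just labels chosen for the illustration.

```python
from fractions import Fraction


def surplus_rationing(v):
    """Expected consumer surplus under a 50-50 lottery at a price of zero."""
    return Fraction(1, 2) * v


def surplus_auction(v):
    """Expected consumer surplus in a second-price auction against one uniform[0, 1] opponent."""
    win_probability = v        # the opponent's value is below v with probability v
    expected_payment = v / 2   # the opponent's average value, conditional on being below v
    return win_probability * (v - expected_payment)


for v in [Fraction(1, 4), Fraction(1, 2), Fraction(3, 4), Fraction(1)]:
    print(f"value {v}: rationing {surplus_rationing(v)}, auction {surplus_auction(v)}")
```

For every value between 0 and 1 the auction surplus is no larger than the rationing surplus, with equality only at the endpoints.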

However, it turns out that the best mechanism for maximizing consumer surplus is always complete rationing (I will prove this as a part of a more general demonstration tomorrow.) Set price equal to marginal cost and use a lottery (or a queue) to allocate among those willing to pay the price. (I assume that the restaurant is not going to just give away money.)

What this tells us is that maximizing consumer surplus can’t be what Nick Kokonas wants. Because with the consumer surplus maximizing mechanism, the restaurant just breaks even. And in this analysis we are leaving out all of the usual problems with rationing such as scalping, encouraging bidders with near-zero willingness to pay to submit bids, etc.

So tomorrow I will take a second stab at the question in search of a good theory of pricing that takes into account the “value proposition” motivation.

Addendum: I received comments from David Miller and Kane Sweeney that will allow me to elaborate on some details. It gets a little more technical than the rest of these posts so you might want to skip over this if you are not acquainted with the theory.

David Miller reminded me of a very interesting paper by Ran Shao and Lin Zhou. (See also this related paper by the same authors.) They demonstrate a mechanism that achieves a higher consumer surplus than the complete rationing mechanism and indeed that achieves the highest consumer surplus among all dominant-strategy, individually rational mechanisms.

Before going into the details of their mechanism let me point out the difference between the question I am posing and the one they answer. In formal terms I am imposing an additional constraint, namely that the restaurant will not give money to any consumer who does not obtain a ticket. The restaurant can give tickets away but it won’t write a check to those not lucky enough to get freebies. This is the right restriction for the restaurant application for two reasons. First if the restaurant wants to maximize consumer surplus it’s because it wants to make people happy about the food they eat, not happy about walking away with no food but a payday. Second, as a practical matter a mechanism that gives away money is just going to attract non-serious bidders who are looking for a handout.

In fact Shao and Zhou are starting from a related but conceptually different motivation: the classical problem of bilateral trade between two agents. In the most natural interpretation of their model the two bidders are really two agents negotiating the sale of an object that one of them already owns. Then it makes sense for one of the agents to walk away with no “ticket” but a paycheck. It means that he sold the object to the other guy.

Ok with all that background here is their mechanism in its simplest form. Agent 1 is provisionally allocated the ticket (so he becomes the seller in the bilateral negotiation.) Agent 2 is given the option to buy from agent 1 at a fixed price. If his value is above that price he buys and pays that price to agent 1. Otherwise agent 1 keeps the ticket and no money changes hands. (David in his comment described a symmetric version of the mechanism which you can think of as representing a random choice of who will be provisionally allocated the ticket. In our correspondence we figured out that the payment scheme for the symmetric version should be a little different, it’s an exercise to figure out how. But I didn’t let him edit his comment. Ha Ha Ha!!!)

In the uniform case the price should be set at 50 cents and this gives a total surplus of 5/8, outperforming complete rationing. It’s instructive to understand how this is accomplished. As I pointed out, an auction takes away consumer surplus from high-valuation types. But in the Shao-Zhou framework there is an upside to this. Because the money extracted will be used to pay off the other agent, raising his consumer surplus. So you want to at least use some auction elements in the mechanism.
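The 5/8 figure can be reproduced with a short calculation. The Python sketch below assumes the simple form of the mechanism described above (agent 1 holds the ticket and agent 2 may buy it at a fixed price) with both values uniform on [0, 1]. The function name and the price grid are illustrative choices.

```python
from fractions import Fraction


def total_surplus(price):
    """Expected total surplus when agent 2 may buy the ticket from agent 1 at a fixed price.

    Payments pass from one agent to the other, so they cancel out of the total.
    """
    prob_no_trade = price                 # agent 2's value falls below the price
    mean_value_agent_1 = Fraction(1, 2)   # agent 1 keeps the ticket whatever his value
    # Agent 2's expected value on the event that he buys: integral of v from price to 1.
    value_to_agent_2_if_trade = (1 - price * price) / 2
    return prob_no_trade * mean_value_agent_1 + value_to_agent_2_if_trade


prices = [Fraction(k, 100) for k in range(101)]
best_price = max(prices, key=total_surplus)
complete_rationing = Fraction(1, 2)  # a lottery at price zero delivers the average value

print("best price on the grid:", best_price)
print("total surplus at that price:", total_surplus(best_price))
print("total surplus under complete rationing:", complete_rationing)
```

On this grid the surplus is maximized at a price of one half, where it equals 5/8, compared with 1/2 under complete rationing.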

One common theme in my analysis and theirs is in fact a deep and under-appreciated result. You never want to “burn money.” Using an auction is worse than complete rationing because the screening benefits of pricing are outweighed by the surplus lost due to the payments to the seller. Using the Shao-Zhou mechanism is optimal precisely because it finds a clever way to redirect those payments so no money is burned. By the way this is also an important theme in David Miller’s work on dynamic mechanisms. See here and here.

Finally, we can verify that the Shao-Zhou mechanism would no longer be optimal if we adapted it to satisfy the constraint that the loser doesn’t receive any money. It’s easy to do this based on the revenue equivalence theorem. In the Shao-Zhou mechanism an agent with zero value gets expected utility equal to 1/8 due to the payments he receives. We can subtract utility of 1/8 from all types and obtain an incentive-compatible mechanism with the same allocation rule. This would be just enough to satisfy my constraint. And then the total surplus will be 5/8-2/8 = 3/8 which is less than the 1/2 of the complete rationing mechanism. That’s another expression of the losses associated with using even the very crude screening in the Shao-Zhou mechanism.

Next let me tell you about my correspondence with Kane Sweeney. He constructed a simple example where an auction outperforms rationing. It works like this. Suppose that each bidder either has a very low willingness to pay, say 50 cents, or a very high willingness to pay, say $1000. If you ration then expected surplus is about $500. Instead you could do the following. Run a second-price auction with the following modification to the rules. If both bid $1000 then toss a coin and give the ticket to the winner at a price of $1. This mechanism gives an expected surplus of about $750.
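The two figures can be reproduced by enumerating the four possible pairs of values. The sketch below assumes two bidders whose types are independent and equally likely to be low or high; the post does not state the probabilities, so that is an assumption made for the illustration, as are the function names.

```python
from fractions import Fraction
from itertools import product

LOW, HIGH = Fraction(1, 2), Fraction(1000)  # 50 cents and $1000
TIE_PRICE_HIGH = Fraction(1)                # price charged when both bid $1000


def rationing_surplus(v1, v2):
    """Lottery at a price of zero: the winner is equally likely to be either bidder."""
    return (v1 + v2) / 2


def modified_auction_surplus(v1, v2):
    """Second-price auction, except that two $1000 bids lead to a coin toss at a price of $1."""
    if v1 == HIGH and v2 == HIGH:
        return HIGH - TIE_PRICE_HIGH
    winner, loser = max(v1, v2), min(v1, v2)
    return winner - loser  # the winner pays the losing bid


def expected(surplus_fn):
    """Average over the four equally likely value profiles (an assumed distribution)."""
    profiles = list(product([LOW, HIGH], repeat=2))
    return sum(surplus_fn(a, b) for a, b in profiles) / len(profiles)


print("rationing:", float(expected(rationing_surplus)))
print("modified auction:", float(expected(modified_auction_surplus)))
```

Under the equal-probability assumption this gives $500.25 for rationing and $749.50 for the modified auction, in line with the rounded figures in the text.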

Basically this type of example shows that the monotone hazard rate assumption is important for the superiority of rationing. To see this, suppose that we smooth out the distribution of values so that types between 50 cents and $1000 have very small positive probability. Then the hazard rate is first increasing around 50 cents and then decreasing from 50 cents all the way to $1000. So you want to pool all the types above 50 cents but you want to screen out the 50-cent types. That’s what Kane’s mechanism is doing.

I would interpret Kane’s mechanism as delivering a slightly nuanced version of the rationing message. You want to screen out the non-serious bidders but ration among all of the serious bidders.

- First world problems.

- Already seen the video of the twin toddlers rapping in their diapers? Have you seen this remake?

- What’s better than chocolate on Valentine’s Day?

- Charlie Crist apologizes to David Byrne.

- Vibrating strings and person.

We are reading it in my Behavioral Economics class and so far we have finished the first 5 chapters which make up Part I of the book “Anticipating Future Preferences.” In Ran Spiegler’s typical style, perfectly crafted simple models are used to illustrate deep ideas that lie at the heart of existing frontier research and, no doubt, future research this book is bound to inspire.

A nod also has to go to Kfir Eliaz who is Rani’s longtime collaborator on many of the papers that preceded this book. Indeed, in a better world they would form a band. It would be an early ’90s geek-rock band like They Might Be Giants or whichever band it was that did The Sweater Song. I hereby name their band Hasty Belgium. (Names of other bands here.)

Many of the examples in the book are referred to as “close variations of” or “free variations of” papers in the literature. And Rani has even written a paper that he calls “a cover version of” a paper by Heidhues and Koszegi. So to continue the metaphor, I offer here some liner notes for the book.

In chapter 5 there is a fantastic distillation of a model due to Michael Grubb that explains Netflix pricing. Conventional models of price discrimination cannot explain three-part tariffs: a membership fee, a low initial per-unit price, and then a high per-unit price that kicks in above some threshold quantity. (Netflix is the extreme case where the initial price per movie is zero, and above some number the price is infinite.) Rani constructs the simplest and clearest possible model to show how such a pricing system is the optimal way to take advantage of consumers who are over-confident in their beliefs about their future demand.

A conventional approach to pricing would be to set price equal to marginal cost, thereby incentivizing the consumer to demand the efficient quantity, and then adding on a membership fee that extracts all of his surplus. You can think of this as the Blockbuster model. The Netflix model by contrast reduces the per-unit price to zero (up to some monthly allotment) but raises the membership fee.

Here’s how that increases profits. Many of us mistakenly think we will watch lots of movies. Netflix re-arranges the pricing structure so that the total amount we expect to pay when we watch all of those movies is the same as in the Blockbuster model. Just now we are paying it all in the form of a membership fee. If it turns out that we watch as many movies as we anticipated, we are no better or worse off and neither is Netflix.

But in fact most of us discover that we are always too busy to watch movies. In the Blockbuster system when that happens we don’t watch movies and so we don’t pay per-unit prices and Blockbuster doesn’t make much money. In the Netflix system it doesn’t matter how many movies we watch, because we already paid.
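To put numbers on the comparison, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the fee, the marginal cost, and the movie counts are invented, and the monthly allotment cap is ignored. It is not Grubb's or Rani's model, just the arithmetic of the argument above.

```python
# Hypothetical numbers, chosen only to illustrate the argument.
MARGINAL_COST = 2.0      # cost to the firm of supplying one movie
BLOCKBUSTER_FEE = 5.0    # membership fee under marginal-cost pricing


def blockbuster_payment(movies_watched):
    """Membership fee plus a per-movie price equal to marginal cost."""
    return BLOCKBUSTER_FEE + MARGINAL_COST * movies_watched


def netflix_payment(movies_anticipated):
    """Zero per-movie price; the fee equals what the consumer *expected*
    to pay in total under the Blockbuster scheme."""
    return blockbuster_payment(movies_anticipated)


def profit(payment, movies_watched):
    """Firm profit: what the consumer paid minus the cost of the movies."""
    return payment - MARGINAL_COST * movies_watched


if __name__ == "__main__":
    anticipated = 10  # what the over-confident consumer thinks he will watch
    for watched in (10, 3):
        b = profit(blockbuster_payment(watched), watched)
        n = profit(netflix_payment(anticipated), watched)
        print(f"watched={watched}: Blockbuster profit={b}, Netflix profit={n}")
```

When the consumer watches exactly what he anticipated the two schemes earn the same. When he watches less, the Blockbuster payment shrinks with his viewing while the Netflix fee was collected up front.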

My only complaint about the book is the title. (Not for those reasons, no.) The term “Bounded Rationality” has fallen out of favor and for good reason. It’s pejorative and it doesn’t really mean anything. A more contemporary title would have been Behavioral Industrial Organization. Now I agree that “Behavioral” is at least as meaningless as “Bounded Rationality.” Indeed it has even less meaning. But that’s a virtue because we don’t have any good word for whatever “Bounded Rationality” and “Behavioral” are supposed to mean. So I prefer a word that has no meaning at all to “Bounded Rationality,” which suggests a meaning that is misplaced.

Analogous to “doctor shopping,” children practice parent shopping. My son comes to me and asks if he can play his computer game. When I say no, he goes and asks his mother. That is, assuming he hasn’t already asked her. After all how can I know that I’m not his second chance?

Indeed, if she is in another room and I have to make an immediate decision I should assume a certain positive probability that he has already approached her and she said no. Assuming that my wife had good reason to say no that inference alone gives me a stronger reason to say no than I already had. How much stronger?

If it’s an activity that he has learned from past experience I am less willing to agree to, then for sure he asked his mother first and she said no. It’s no wonder I am the tough guy when it comes to those activities.

If it’s an activity where I am more lenient he’s going to come to me first for sure. But his strategic behavior still influences my answer. I know that if I say no, he’s going to her next and she’s going to reason exactly as in the previous paragraph. So she’s going to be tougher. Now sometimes I say no because I am really close to being on the fence and it makes sense to defer the decision to his Mother. Saying no effectively defers that decision because I know he’s going to ask her next. But now that his Mother is tougher than she would be in the first-best world, I must become a bit more lenient in these marginal cases.

Iterate.

(Addendum: If you want to know how to combat these ploys, go ask Josh Gans.)

This time the subjects were in fMRI scanners while they delivered electric shocks for money.

But in FeldmanHall’s study, things actually happened. “There are real shocks and real money on the table,” she said. Subjects lying in an MRI scanner were given a choice: Either administer a painful electric shock to a person in another room and make one British pound (a little over a dollar and a half), or spare the other person the shock and forgo the money. Shocks were priced in a graded manner, so that the subject would earn less money for a light shock, and earn the whole pound for a severe shock. This same choice was given 20 times, and the person in the brain scanner could see a video of either the shockee’s hand jerk or both the hand jerk and the face grimace. (Although these shocks were real, they were pre-recorded.)

The brain scanners are supposed to shed light on the neuroscience of moral behavior.

Even though the findings are “a little bit chilling,” Wager says, “it’s important to know.” These kinds of studies can help scientists figure out how the brain dictates moral behavior. “There’s a real neuroscientific interest now in understanding the basis of compassion,” Wager says. “That’s something we are just starting to address scientifically, but it’s a critical frontier because it has such an impact on human life.”

Barretina bow: Not Exactly Rocket Science.

Two aspects of our taste for good weather are in force in the Spring. First, we enjoy the warmer weather but we have diminishing marginal utility for higher and higher temperatures. Second, we have reference-dependence: a 40F day feels balmy in March when it’s been below freezing for the past three months but the same 40F day gives you the chills in May on that day when Winter sends you its final parting gift from the grave.

Given those preferences, here’s how a benevolent Mother Nature would maximize the joy of Spring. Each day raise the temperature by just a little bit. Gobble up the steep part of our utility for warmth but stop before marginal utility declines too much. Then, tomorrow when our reference point adjusts upward pushing the steep part back into play, gobble up those marginal utils again. Repeat. This steady but gradual transition from Winter to Summer would be the hallmark of a benevolent Mother Nature.

But woe is us, here in Chicago our Mother Nature is of a different sort than that. She seems well-acquainted with another aspect of our reference-dependent weather preferences: loss aversion. Drops in temperature hurt more than equal-sized jumps upward. Our Mother has figured out how to exploit this to full effect and minimize the joy of Spring. It all starts in late February when she lays on us a miraculous 60F day right out of nowhere. Our reference points soar. But then we take the plunge back down on the steep side of the loss-aversion curve and the round trip is worse than if we just had another two days of plain old Winter.

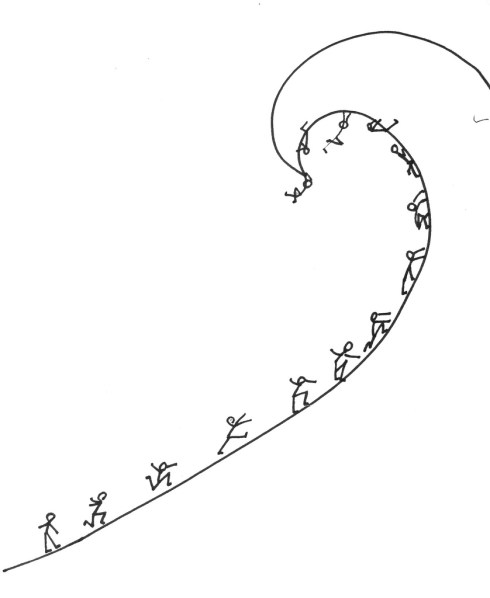

And that pattern pretty much repeats until about June 1. Instead of that gradual steady incline our Spring in Chicago is the classic sawtooth pattern, a series of tragicomic episodes in which our reference points are coaxed upward and then smashed back into place like some kind of meteorologic Moe-Curly routine. Thoughts of summer give us the hope to soldier on, but only if in the past year we were lucky enough to have forgotten whom she hands the baton to once the temperature finally settles down: the mosquitos.
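For the record, here is a small Python sketch of the two Mother Natures. The functional form is an assumption of mine: each day's joy depends only on the change from yesterday's temperature (the reference point), gains are concave (a square root), and losses are scaled up by a hypothetical loss-aversion factor of 2. The temperature paths are invented.

```python
import math

LOSS_AVERSION = 2.0  # hypothetical: a drop hurts twice as much as an equal rise pleases


def gain_loss_utility(change):
    """Joy from a temperature change relative to yesterday's reference point.
    Concave in gains (diminishing marginal utility), steeper in losses."""
    if change >= 0:
        return math.sqrt(change)
    return -LOSS_AVERSION * math.sqrt(-change)


def spring_joy(temps):
    """Total joy along a path of daily temperatures."""
    return sum(gain_loss_utility(today - yesterday)
               for yesterday, today in zip(temps, temps[1:]))


if __name__ == "__main__":
    paths = {
        "benevolent (gradual)": [30, 40, 50, 60],
        "one big jump": [30, 30, 30, 60],
        "Chicago (sawtooth)": [30, 60, 30, 60],
    }
    for name, temps in paths.items():
        print(f"{name}: {spring_joy(temps):.2f}")
```

All three paths start and end at the same temperatures, so any difference in total joy comes purely from the route. The gradual path wins because it keeps harvesting the steep part of the gain curve, and the sawtooth loses because every plunge lands on the steep side of the loss curve.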

Predict which flights will be overbooked, buy a ticket, trade it in for a more valuable voucher.

Still, there are some travelers who see the flight crunch as a lucrative opportunity. Among them is Ben Schlappig. The 20-year-old senior at the University of Florida said he earned “well over $10,000” in flight vouchers in the last three years by strategically booking flights that were likely to be oversold in the hopes of being bumped.

“I don’t remember the last time I paid over $100 for a ticket,” he boasted. His latest coup: picking up $800 in United flight vouchers after giving up his seat on two overbooked flights in a row on a trip from Los Angeles to San Francisco. Or as he calls it, “a double bump.”

The full article has a rundown of all the tricks you need to know to get into the bumpee business. I was surprised to read this.

Most of those people volunteered to give up their seats in return for some form of compensation, like a voucher for a free flight. But D.O.T. statistics also show that about 1.09 of every 10,000 passengers was bumped involuntarily.

On the other hand, it is not surprising because involuntary bumping only lowers the value of a ticket. Monetary (or voucher) compensation can be recouped in the price of the ticket (in expectation.)

Garrison grab: Daniel Garrett.

A former academic economist and game theorist is now the Chief Economic Advisor in the Ministry of Finance in India. His name is Kaushik Basu. Via MR, here is a policy paper he has just written advising that the giving of bribes should be de-criminalized.

The paper puts forward a small but novel idea of how we can cut down the incidence of bribery. There are different kinds of bribes and what this paper is concerned with are bribes that people often have to give to get what they are legally entitled to. I shall call these “harassment bribes.” Suppose an income tax refund is held back from a taxpayer till he pays some cash to the officer. Suppose government allots subsidized land to a person but when the person goes to get her paperwork done and receive documents for this land, she is asked to pay a hefty bribe. These are all illustrations of harassment bribes. Harassment bribery is widespread in India and it plays a large role in breeding inefficiency and has a corrosive effect on civil society. The central message of this paper is that we should declare the act of giving a bribe in all such cases as legitimate activity. In other words the giver of a harassment bribe should have full immunity from any punitive action by the state.

This is not just crazy talk; there is some logic behind it, fleshed out in the paper. If giving a bribe is forgiven but demanding a bribe remains a crime, then citizens forced to pay bribes for routine government services will have an incentive to report the bribe to the authorities. This will discourage harassment bribery.

The obvious question is whether the bribe-enforcement authority will itself demand bribes. To whom does a citizen report having given a bribe to the bribe authority? At some point there is a highest bribe authority and it can demand bribes with impunity. With that power they can extract all of the reporter’s gains by demanding it as a bribe.

Worse still they can demand an additional bribe from the original harasser in return for exonerating her. The effect is that the harasser sees only a fraction of the return on her bribe demands. This induces her to ask for even higher bribes. Higher bribes means fewer citizens are able to pay them and fewer citizens receive their due government services.

The bottom line is that in an economy run on bribes you want to make the bribes as efficient as possible. That may mean encouraging them rather than discouraging them.

There are a few basic features that Grant Achatz and Nick Kokonas should build into their online ticket sales. First, you want a good system to generate the initial allocation of tickets for a given date. Second, you want an efficient system for re-allocating tickets as the date approaches. Finally, you want to balance revenue maximization against the good vibe that comes from getting a ticket at a non-exorbitant price.

- Just like with the usual reservation system, you would open up ticket sales for, say August 1, 3 months in advance on May 1. It is important that the mechanism be transparent, but at the same time understated so that the business of selling tickets doesn’t draw attention away from the main attractions: the restaurant and the bar. The simple solution is to use a sealed bid N+1st price auction. Anyone wishing to buy a ticket for August 1 submits a bid. Only the restaurant sees the bid. The top 100 bidders get tickets and they pay a price equal to the 101st highest bid. Each bidder is informed whether he won or not and the final price. With this mechanism it is a dominant strategy to bid your true maximal willingness to pay so the auction is transparent, and all of the action takes place behind the scenes so the auction won’t be a spectacle distracting from the overall reputation of the restaurant.

- Next probably wants to allow patrons to buy at lower prices than what an auction would yield. That makes people feel better about the restaurant than if it was always trying to extract every last drop of consumer’s surplus. It’s easy to work that into the mechanism. Decide that 50 out of 100 seats will be sold to people at a fixed price and the remainder will be sold by auction. The 50 lucky people will be chosen randomly from all of those whose bid was at least the fixed price. The division between fixed-price and auction quantities could easily be adjusted over time, for different days of the week, etc.

- The most interesting design issue is to manage re-allocation of tickets. This is potentially a big deal for a restaurant like Next because many people will be coming from out of town to eat there. Last-minute changes of plans could mean that rapid re-allocation of tickets will have a big impact on efficiency. More generally, a resale market raises the value of a ticket because it turns the ticket into an option. This increases the amount people are willing to bid for it. So Next should design an online resale market that maximizes the efficiency of the allocation mechanism because those efficiency gains not only benefit the patrons but they also pay off in terms of initial ticket sales.

- But again you want to minimize the spectacle. You don’t want Craigslist. Here is a simple transparent system that is again discreet. After the original allocation of tickets by auction, anyone who wishes to purchase a ticket for August 1 submits their bid to the system. In addition, anyone currently holding a ticket for August 1 has the option of submitting a resale price to the system. These bids are all kept secret internally in the system. At any moment in which the second highest bid exceeds the second lowest resale price offered, a transaction occurs. In that transaction the highest bidder buys the ticket and pays the second-highest bid. The seller who offered the lowest price sells his ticket and receives the second lowest price.

- That pricing rule has two effects. First, it makes it a dominant strategy for buyers to submit bids equal to their true willingness to pay and for sellers to set their true reserve prices. Second, it ensures that Next earns a positive profit from every sale equal to the difference between the second-highest bid and the second-lowest resale price. In fact it can be shown that this is the system that maximizes the efficiency of the market subject to the constraint that the market is transparent (i.e. dominant strategies) and that Next does not lose money from the resale market.

- The system can easily be fine-tuned to give Next an even larger cut of the transactions gains, but a basic lesson of this kind of market design is that Next should avoid any intervention of that sort. Any profits earned through brokering resale only reduce the efficiency of the resale market. If Next is taking a cut then a trade will only occur if the gains outweigh Next’s cut. Fewer trades means a less efficient resale market and that means that a ticket is a less flexible asset. The final result is that whatever profits are being squeezed out of the resale market are offset by reduced revenues from the original ticket auction.

- The one exception to the latter point is the people who managed to buy at the fixed price. If the goal was to give those people the gift of being able to eat at Next for an affordable price and not to give them the gift of being able to resell to high rollers, then you would offer them only the option to sell back their ticket at the original price (with Next either selling it again at the fixed price or at the auction price, pocketing the spread.) This removes the incentive for “scalpers” to flood the ticket queue, something that is likely to be a big problem for the system currently being used.

- A huge benefit of a system like this is that it makes maximal use of information about patrons’ willingness to pay and with minimal effort. Compare this to a system where Next tries to gauge buyer demand over time and set the market clearing price. First of all, setting prices is guesswork. An auction figures out the price for you. Second, when you set prices you learn very little about demand. You learn only that so many people were willing to pay more than the price. You never find out how much more than that price people would have been willing to pay. A sealed bid auction immediately gives you data on everybody’s willingness to pay. And at every moment in time. That’s very valuable information.
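The two pricing rules in the list above are simple enough to write down. Here is a rough Python sketch of the initial N+1st price auction and of a single resale match. The function names, the data layout (a dict from bidder name to amount), the tie-breaking by sort order, and the handling of thin markets are all my own assumptions. The fixed-price lottery and the sell-back rule for fixed-price tickets are left out.

```python
def initial_auction(bids, seats):
    """Sealed-bid (N+1)st-price auction.

    bids:  dict mapping bidder name -> bid
    seats: number of tickets N
    Returns (winners, price): the top N bidders win and each pays the
    (N+1)st highest bid.
    """
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winners = [name for name, _ in ranked[:seats]]
    # Assumption: with too few bidders to fill the room, the price is 0.
    price = ranked[seats][1] if len(ranked) > seats else 0
    return winners, price


def resale_match(buy_bids, resale_prices):
    """One step of the resale market described above.

    A trade happens only when the second-highest bid exceeds the
    second-lowest resale price. The highest bidder pays the second-highest
    bid, the lowest-price seller receives the second-lowest resale price,
    and the house keeps the difference.
    Returns a dict describing the trade, or None if no trade occurs.
    """
    # Assumption: the rule needs at least two bids and two resale offers.
    if len(buy_bids) < 2 or len(resale_prices) < 2:
        return None
    bids_desc = sorted(buy_bids.items(), key=lambda item: item[1], reverse=True)
    asks_asc = sorted(resale_prices.items(), key=lambda item: item[1])
    buyer, buyer_pays = bids_desc[0][0], bids_desc[1][1]
    seller, seller_receives = asks_asc[0][0], asks_asc[1][1]
    if buyer_pays <= seller_receives:
        return None
    return {
        "buyer": buyer,
        "seller": seller,
        "buyer_pays": buyer_pays,
        "seller_receives": seller_receives,
        "house_spread": buyer_pays - seller_receives,
    }


if __name__ == "__main__":
    print(initial_auction({"ann": 500, "bob": 400, "cat": 300, "dan": 200}, seats=2))
    print(resale_match({"eve": 300, "fay": 250, "gus": 100},
                       {"ann": 150, "bob": 200}))
```

Notice that in both functions the price a trader pays or receives never depends on that trader's own report, which is what makes truthful bidding a dominant strategy.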

Jonah Lehrer writes about how bad NFL teams are at drafting talented players, particularly at the quarterback position.

Despite this advantage, however, sports teams are impressively amateurish when it comes to the science of human capital. Time and time again, they place huge bets on the wrong players. What makes these mistakes even more surprising is that teams have a big incentive to pick the right players, since a good QB (or pitcher or point guard) is often the difference between a middling team and a contender. (Not to mention, the player contracts are worth tens of millions of dollars.) In the ESPN article, I focus on quarterbacks, since the position is a perfect example of how teams make player selection errors when they focus on the wrong metrics of performance. And the reason teams do that is because they misunderstand the human mind.

He talks about a test that is given to college quarterbacks eligible for the NFL draft to test their ability to make good decisions on the field. Evidently this test is considered important by NFL scouts and indeed scores on this test are good predictors of whether and when a QB will be selected in the draft.

However,

Consider a recent study by economists David Berri and Rob Simmons. While they found that Wonderlic scores play a large role in determining when QBs are selected in the draft — the only equally important variables are height and the 40-yard dash — the metric proved all but useless in predicting performance. The only correlation the researchers could find suggested that higher Wonderlic scores actually led to slightly worse QB performance, at least during rookie years. In other words, intelligence (or, rather, measured intelligence), which has long been viewed as a prerequisite for playing QB, would seem to be a disadvantage for some guys. Although it’s true that signal-callers must grapple with staggering amounts of complexity, they don’t make sense of questions on an intelligence test the same way they make sense of the football field. The Wonderlic measures a specific kind of thought process, but the best QBs can’t think like that in the pocket. There isn’t time.

I have not read the Berri-Simmons paper but inferences like this raise alarm bells. For comparison, consider the following observation. Among NBA basketball players, height is a poor predictor of whether a player will be an All-Star. Therefore, height does not matter for success in basketball.

The problem is that, both in the case of IQ tests for QBs and height for NBA players, we are measuring performance conditional on being good enough to compete with the very best. We don’t have the data to compare the QBs who are drafted to the QBs who are not and how their IQ factors into the difference in performance.

The observable characteristic (IQ scores, height) is just one of many important characteristics, some of which are not quantifiable in data. Given that the player is selected into the elite, if his observable score is low we can infer that his unobservable scores must be very high to compensate. But if we omit those intangibles in the analysis, it will look like people with low scores are about as good as people with high scores and we would mistakenly conclude that they don’t matter.
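A quick simulation makes the point concrete. This is a toy sketch in Python, not a reconstruction of the Berri-Simmons analysis: I assume the observable score and the unobserved intangibles are independent standard normals, that performance is their sum plus noise, and that scouts draft anyone whose combined talent clears a hypothetical cutoff.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def simulate(num_players=50_000, draft_cutoff=2.0, seed=0):
    """Return (correlation among all players, correlation among the drafted)
    between the observable test score and eventual performance."""
    rng = random.Random(seed)
    all_scores, all_perf = [], []
    drafted_scores, drafted_perf = [], []
    for _ in range(num_players):
        test_score = rng.gauss(0, 1)    # observable (IQ test, height, ...)
        intangibles = rng.gauss(0, 1)   # seen by scouts, absent from the data
        performance = test_score + intangibles + rng.gauss(0, 1)
        all_scores.append(test_score)
        all_perf.append(performance)
        # Hypothetical selection rule: scouts draft on total talent.
        if test_score + intangibles > draft_cutoff:
            drafted_scores.append(test_score)
            drafted_perf.append(performance)
    return pearson(all_scores, all_perf), pearson(drafted_scores, drafted_perf)


if __name__ == "__main__":
    corr_all, corr_drafted = simulate()
    print(f"all players: {corr_all:.2f}   drafted only: {corr_drafted:.2f}")
```

By construction the test score matters exactly as much as the intangibles, yet in the drafted sample its correlation with performance is much weaker than in the full population. A regression run only on the drafted players would understate its importance.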

- Junior Ghadaffi’s US Tour, interrupted by war. (Sandeep blogged this yesterday, but you may have missed it.)

- Bacon cologne.

- Early Jim Henson.

- “I think I no how to make people or animals alive.”

- Scott Adams rant.

- Your rental car dashboard tells you what side the gas cap is on.

- My birthdate appears twice in the first 200000000 digits of pi.

The Boston Globe profiles Al Roth, who together with Atila Abdulkadiroglu, Tayfun Sonmez, Utku Unver and many others is leading the most important development in Microeconomics right now: market design.

Roth’s most recent project is helping to set up a nationwide kidney exchange, which would make it possible to find even more matches than the existing regional networks can find on their own. Running this national network has been a bureaucratic nightmare, and since it opened for business last fall, only two transplants have actually been carried out under its auspices. The problem is that depending on blood type, it can be hard or easy to find someone a compatible kidney. And when a hospital has an easy-to-match patient, its administrators are more likely to withhold that information from the other hospitals in the network because they’d rather do the transplant themselves, and get the business.

Alex Tabarrok wrote a thought-provoking piece on some ideas to increase kidney donation.

A distinguished colleague (whom I will spare the outing) teaches in the lecture room after me. I received this email from him:

Subject: Any chance you could erase the Leverdome blackboard?

Or is this a Coase theorem thing?

Not the Coase Theorem, no. The Coase Theorem is all about parties coming together to form agreements that enhance welfare. No, my dust-bound comrade, this is much simpler, seeing as how aggregate welfare is improved by the unilateral deviation of a single agent, namely me.

You see those days when we were following the conventional norm, according to which each Professor erases the chalkboard after his own lecture leaving a clean board for the next class, we were leaving a free lunch just sitting there on the table. Because any one of us could have changed course, leaving the board to be erased by the next guy before his class, thus triggering a switch to the superior erase-before convention.

Now as I am sure I don’t have to explain to you, once the convention is settled every Professor erases exactly once per day. So nobody is any worse off. But as you have by now noticed, that one particular Professor who initiated the switch avoids erasing that one time and is therefore strictly better off. A Pareto improvement! But of course you are now well-trained at spotting those, having just yesterday surveyed my lecture notes covering that very subject as you were erasing them from the Leverdome chalkboard.

From MR, I read this story about how the San Francisco smart parking meters will be designed to adjust meter rates in real time according to demand. There wasn’t much detail there but this bit gave me pause.

Rates at curbside meters in the project area will be adjusted block by block in an attempt to have at least one parking space available at any time on a given block.

(My approach to blogging is to send myself emails whenever I have an idea, then sort through those emails when I have the time and decide what to write about. Some ideas have gathered dust over the past year and it’s time to use them or lose them.)

When do you give up on a book? It’s an optimal stopping problem with an experimentation aspect. The more you read, the more uncertainty gets resolved and the more you learn whether the book will be rewarding enough to finish. You stop reading when the expected continuation value, which includes the option value of quitting later, falls below the value of the next book in your queue.

So here’s an interesting question. Is that more likely to happen at the beginning or near the end of a book? Ignore the irrational desire to complete a book just because you have already sunk a lot of time into reading it. (But do include the payoff from finding out what happens with all the threads you have followed along the way.)

It easily could be that the most likely time to quit reading a book is close to the end. Indeed the following is a theorem. For any belief about the flow value of the book going forward, if that belief leads you to dump the book near the beginning, then that same belief must lead you to dump the book nearer the end. Because the closer you are to the end of the book, the lower the option value and the smaller the chance that it will get better.

It sounds wrong because probably even the most ruthless book trashers rarely quit near the end. But there’s no contradiction. Even if the option value rule implies that the threshold quality required to continue reading is increasing as you get deeper into the book, it can still be true that statistically you most often quit reading near the beginning of a book. Because conditional on a book being dump-worthy, you are more likely to figure that out and cross that threshold for the first time early on rather than later.
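Here is a toy simulation of that last point, in Python. It does not solve the optimal stopping problem. It simply assumes a continuation threshold that rises as you get deeper into the book and then counts when readers first cross it. The two book types, the normal enjoyment signals, the 50/50 prior, and the linear threshold are all hypothetical choices of mine.

```python
import math
import random

NUM_CHAPTERS = 20


def continue_threshold(chapter):
    """Minimum belief that the book is good needed to keep reading after
    `chapter`. Assumed (not derived) to rise linearly with progress."""
    return 0.1 + 0.4 * chapter / NUM_CHAPTERS


def quit_chapter(is_good, rng):
    """Chapter after which the reader dumps the book, or None if he finishes."""
    log_odds = 0.0                      # 50/50 prior that the book is good
    mean_enjoyment = 1.0 if is_good else -1.0
    for chapter in range(1, NUM_CHAPTERS):   # no decision after the last chapter
        enjoyment = rng.gauss(mean_enjoyment, 1.0)
        # Log-likelihood ratio of N(1, 1) against N(-1, 1) at x is 2x.
        log_odds += 2.0 * enjoyment
        belief_good = 1.0 / (1.0 + math.exp(-log_odds))
        if belief_good < continue_threshold(chapter):
            return chapter
    return None


def quit_counts(num_books=10_000, seed=0):
    """counts[c] = number of books dumped right after chapter c."""
    rng = random.Random(seed)
    counts = [0] * NUM_CHAPTERS
    for _ in range(num_books):
        is_good = rng.random() < 0.5
        chapter = quit_chapter(is_good, rng)
        if chapter is not None:
            counts[chapter] += 1
    return counts


if __name__ == "__main__":
    counts = quit_counts()
    print("dumped in first half: ", sum(counts[1:11]))
    print("dumped in second half:", sum(counts[11:]))
```

The bar for continuing gets higher with every chapter, yet under these assumptions the dumping is concentrated in the early chapters, because a bad book usually reveals itself quickly.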

Turing Test #N-1: detect sarcasm:

“Sarcasm, also called verbal irony, is the name given to speech bearing a semantic interpretation exactly opposite to its literal meaning.” With that in mind, they then focussed on 131 occurrences of the phrase “yeah right” in the ‘Switchboard’ and ‘Fisher’ recorded telephone conversation databases. Human listeners who sifted the data found that roughly 23% of the “yeah right”s which occurred were used in a recognisably sarcastic way. The lab’s computer algorithms were then ‘trained’ with two five-state Hidden Markov Models (HMM) and set to analyse the data – and the programmes performed relatively well, successfully flagging some 80% of the sarky “yeah right”s.

That’s pretty good, but I’ll wait around for the computers to pass the Nth and ultimate Turing Test: compose a joke that is actually funny.

Honestly if we had to rank tests of similarity to human interaction, I believe that composing original humor is probably the very last one computers will solve. (Restricting attention to the usual thought experiment where the subject you are interacting with is in another room and you have to judge whether it is a human or a computer just on the basis of text-based interaction.)

I am always writing about athletics from the strategic point of view: focusing on the tradeoffs. One tradeoff in sports that lends itself to strategic analysis is effort vs performance. When do you spend the effort to raise your level of play and rise to the occasion?

My posts on those subjects attract a lot of skeptics. They doubt that professional athletes do anything less than giving 100% effort. And if they are always giving 100% effort, then the outcome of a contest is just determined by gourd-given talent and random factors. Game theory would have nothing to say.

We can settle this debate. I can think of a number of smoking guns to be found in data that would prove that, even at the highest levels, athletes vary their level of performance to conserve effort; sometimes trying hard and sometimes trying less hard.

Here is a simple model that would generate empirical predictions. It’s a model of a race. The contestants continuously adjust how much effort to spend to run, swim, bike, etc. to the finish line. They want to maximize their chance of winning the race, but they also want to spend as little effort as necessary. So far, straightforward. But here is the key ingredient in the model: the contestants are looking forward when they race.

What that means is at any moment in the race, the strategic situation is different for the guy who is currently leading compared to the trailers. The trailer can see how much ground he needs to make up but the leader can’t see the size of his lead.

If my skeptics are right and the racers are always exerting maximal effort, then there will be no systematic difference in a given racer’s time when he is in the lead versus when he is trailing. Any differences would be due only to random factors like the racing conditions, what he had for breakfast that day, etc.

But if racers are trading off effort and performance, then we would have some simple implications that, if they were borne out in data, would reject the skeptics’ hypothesis. The most basic prediction follows from the fact that the trailer will adjust his effort according to the information he has that the leader does not have. The trailer will speed up when he is close and he will slack off when he has no chance.

In terms of data the simplest implication is that the variance of times for a racer when he is trailing will be greater than when he is in the lead. And more sophisticated predictions would follow. For example the speed of a trailer would vary systematically with the size of the gap while the speed of a leader would not.

The results from time trials (isolated performance where the only thing that matters is time) would be different from results in head-to-head competitions. The results in sequenced competitions, like downhill skiing, would vary depending on whether the racer went first (in ignorance of the times to beat) or last.

And here’s my favorite: swimming races are unique because there is a brief moment when the leader gets to see the competition: at the turn. This would mean that there would be a systematic difference in effort spent on the return lap compared to the first lap, and this would vary depending on whether the swimmer is leading or trailing and with the size of the lead.

And all of that would be different for freestyle races compared to backstroke (where the leader can see behind him.)

Finally, it might even be possible to formulate a structural model of an effort/performance race and estimate it with data. (I am still on a quest to find an empirically oriented co-author who will take my ideas seriously enough to partner with me on a project like this.)

Drawing: Because Its There from www.f1me.net

This is a plate of green curry chicken that I ate at a KFC in Chiang Mai. (Sorry about the shoddy phone-photos.) It cost 59 baht (about $1.90), it was delivered to my table by a waiter, and it wasn’t surprisingly good, or not bad considering, but legitimately excellent.

Think this is an unfair comparison? Even setting aside all the local adaptations, like the baby pearl eggplant (those aren’t peas), fresh chili pepper, and lashings of canonical green curry, the chicken alone was crisper and juicier than any I’ve ever had at a KFC in America. That chicken thigh was split open, dusted in whatever magical substance they use to give it that scaly crust, fried to a crackle, and sent right to my table without ever seeing a heat lamp.